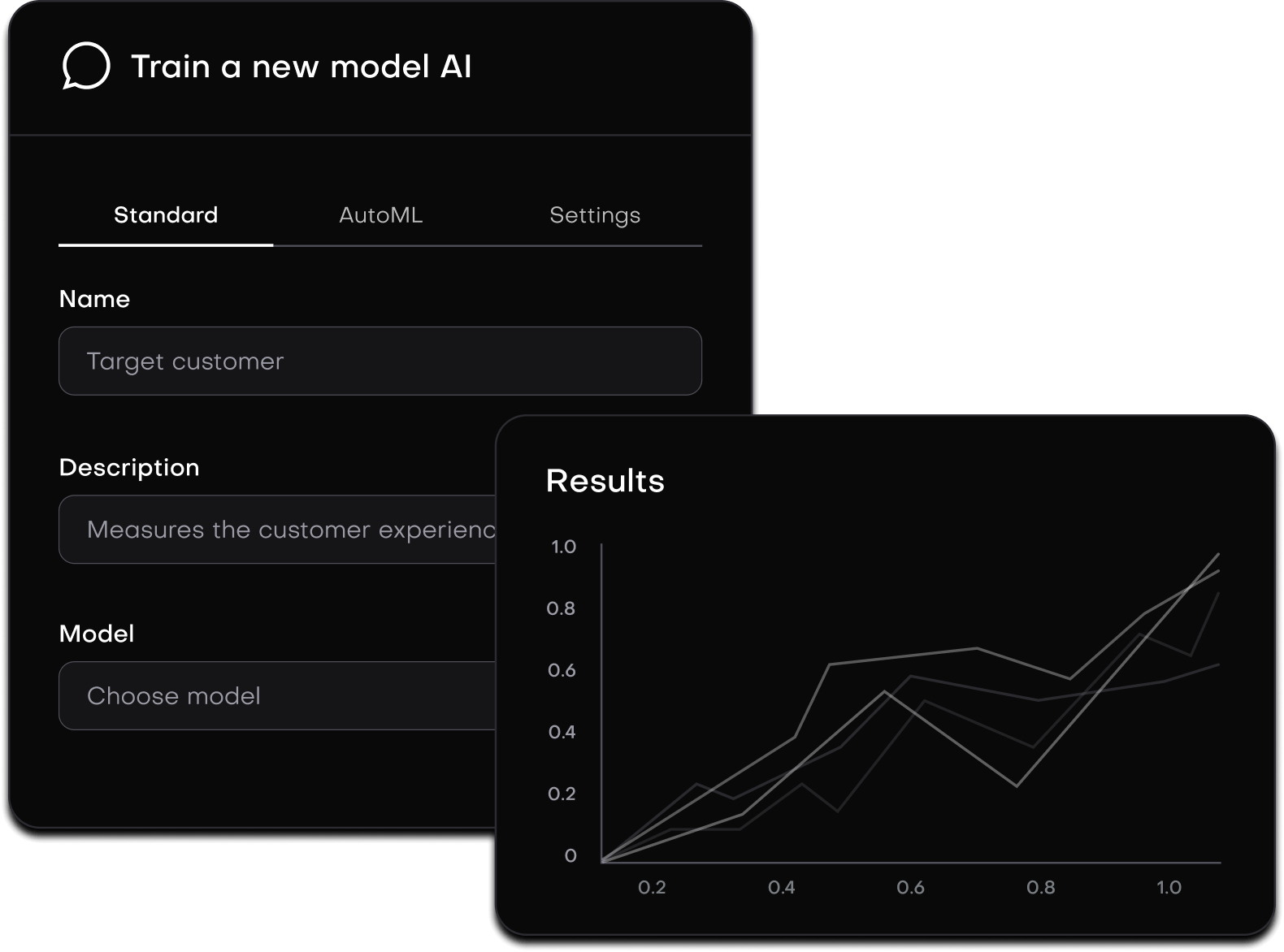

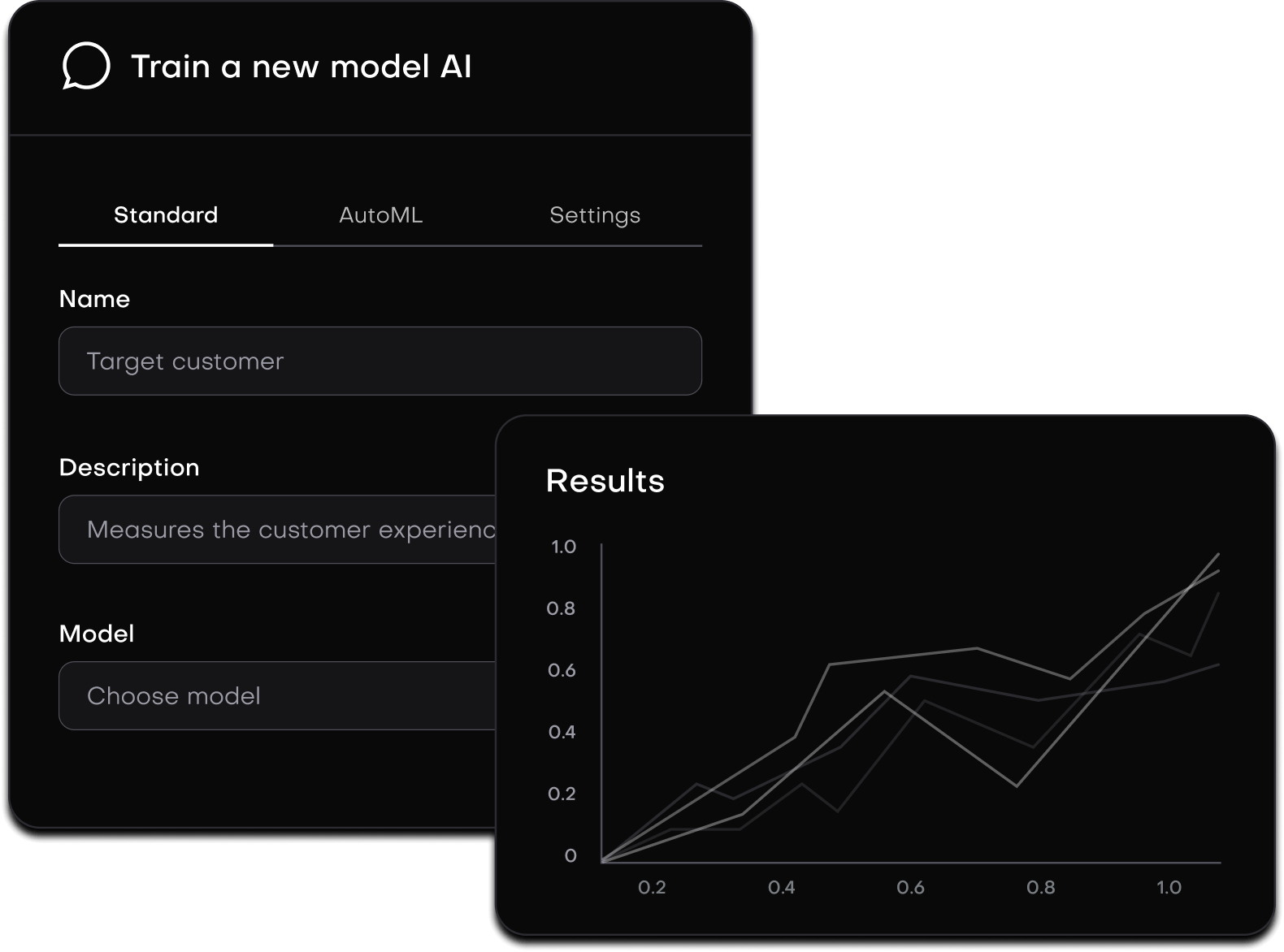

Train, deploy, scale, and maintain generative AI models in a safe and intuitive environment.

Seamless training, deployment, and optimization for generative AI transformation.

Our collection of pre-trained open-source models supports fine-tuning on enterprise-specific data, so you can choose a model tailored to your individual use case.

Automated annotation

Smart labels

Comprehensive, secure, and responsive file interactions, including on-premises embeddings and vector databases, alleviate data privacy concerns.

Sentiment analysis

Language processing

Automated resource adjustments cope with peak loads, ensuring uninterrupted application performance.

Automated annotation

Smart labels

Word-by-word output streaming and dynamic request batching ensure smooth, standards-compliant API integration.

Sentiment analysis

Language processing
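To make the dynamic request batching mentioned above more concrete, here is a minimal sketch of one common approach. It is not the platform's actual implementation: the function name `dynamic_batches` and the `max_batch_size` and `max_wait` parameters are assumptions chosen for illustration.

```python
def dynamic_batches(arrivals, max_batch_size=4, max_wait=0.05):
    """Group (timestamp, request) pairs, sorted by timestamp, into batches.

    A batch closes when it is full, or when the next request arrives more
    than max_wait seconds after the first request in the batch.
    All names and defaults here are hypothetical.
    """
    batches, current, opened_at = [], [], None
    for ts, request in arrivals:
        # Close the open batch if it is full or has waited too long.
        if current and (len(current) >= max_batch_size or ts - opened_at > max_wait):
            batches.append(current)
            current = []
        if not current:
            opened_at = ts  # the first request in a batch starts the wait clock
        current.append(request)
    if current:
        batches.append(current)
    return batches
```

Larger batches tend to improve accelerator utilization, while the wait limit bounds the extra latency any single request can pick up.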

Efficient infrastructure accelerates the path from model development to production, ensuring faster and more streamlined deployment.

Cost-effective LLM scaling through intelligent resource management and auto-scaling, suitable for organizations of any size.

Data privacy and security are our top priorities. We offer enterprise-grade security features to safeguard your data and models.

Avoid vendor lock-in with our multi-cloud orchestration, built for smooth cross-cloud operation. We can deploy directly to your cloud for full compliance and risk mitigation.

We enable direct deployment in your private cloud infrastructure, including AWS, GCP, or Azure. For strict security requirements, we can create a secure gov-cloud environment that meets elevated security standards.

While our model may not outperform GPT-4 on every task, it excels in specific niches. Where general-purpose conversational models such as GPT-4, Claude, or Bard aim for versatility, our model is fine-tuned for domains such as insurance, finance, enterprise data management, and legal work, and for tailored corporate needs such as customer support and code assistance, delivering better efficiency and results in these domains.

Our API's architecture is aligned with OpenAI's, ensuring a smooth transition for users migrating from OpenAI. This compatibility minimizes changes to existing integrations and makes it easy to keep working with OpenAI's established protocols, function calls, and data handling methods.
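As a rough illustration of what OpenAI-style compatibility means for an existing integration, the sketch below builds (but does not send) a request in the OpenAI Chat Completions shape against a different base URL. The endpoint `https://llm.example.com/v1`, the model name, and the helper `build_chat_request` are hypothetical placeholders, not documented values.

```python
import json
import urllib.request

# Hypothetical endpoint and model name, used only for illustration.
BASE_URL = "https://llm.example.com/v1"
MODEL = "example-finetuned-model"


def build_chat_request(prompt: str, api_key: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a request in the OpenAI Chat Completions shape."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

If the API is compatible in this way, migrating an integration would mostly come down to changing the base URL, API key, and model name while the request body stays the same.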

We aim to provide fast, tailored onboarding for open-source LLMs. It typically takes just a few days, depending on your data size and specific needs and on the readiness and complexity of your infrastructure.

Our system integrates with LlamaIndex, which broadens the range of data sources we support. If a data source lacks an open-source connector, we will build a custom one to integrate your data streams.

We keep your data current either by monitoring CRUD operations or through a one-time data encoding process, reducing unnecessary processing costs.
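One way to picture the CRUD-monitoring approach is an index that re-encodes a document only when its content has actually changed. The sketch below is an assumption about how such change tracking could work, not a description of the product's internals. The class `IncrementalIndex` and the `embed` callable are hypothetical.

```python
import hashlib


class IncrementalIndex:
    """Tracks content hashes so only created or changed documents are re-encoded."""

    def __init__(self, embed):
        self._embed = embed    # hypothetical embedding function: str -> list[float]
        self._hashes = {}      # doc_id -> content hash
        self._vectors = {}     # doc_id -> embedding

    def upsert(self, doc_id: str, text: str) -> bool:
        """Handle a create or update event. Returns True if the text was (re)encoded."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if self._hashes.get(doc_id) == digest:
            return False       # unchanged content: skip the encoding cost
        self._hashes[doc_id] = digest
        self._vectors[doc_id] = self._embed(text)
        return True

    def delete(self, doc_id: str) -> None:
        """Handle a delete event by dropping the stored hash and vector."""
        self._hashes.pop(doc_id, None)
        self._vectors.pop(doc_id, None)

    def __len__(self) -> int:
        return len(self._vectors)
```

Comparing hashes before encoding is what keeps repeated update events cheap: the embedding function runs only for new or modified content.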

We support a wide range of LLMs and providers, including but not limited to Azure OpenAI, OpenAI, Anthropic, Bard, and Llama. Our platform is compatible with any inference endpoint you specify.

Building on the Llama 2 70B model, we extended its pre-training phase, incorporating and experimenting with the methods established by Chen et al. and YaRN. This extends the model's context window from its original 4K token limit to 32K tokens.
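For intuition, the sketch below shows the core idea of position interpolation for rotary embeddings, on the assumption that "Chen et al." refers to the Position Interpolation method. Position indices in the extended window are rescaled so they fall inside the range seen during the original pre-training. The head dimension and base are illustrative defaults, YaRN's frequency-dependent scaling is not shown, and this is not the training code used for the model.

```python
ORIGINAL_CONTEXT = 4096    # 4K original context window, from the text
EXTENDED_CONTEXT = 32768   # 32K extended context window, from the text


def rope_angles(position: float, head_dim: int = 8, base: float = 10000.0) -> list[float]:
    """Rotary angles position * base^(-2i/d), one per pair of dimensions.

    head_dim and base are illustrative values, not the model's configuration.
    """
    return [position * base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def interpolated_position(position: int) -> float:
    """Rescale a position in the extended window into the original trained range."""
    return position * ORIGINAL_CONTEXT / EXTENDED_CONTEXT
```

With these numbers the scale factor is 4096 / 32768 = 1/8, so the last position of the 32K window maps to just under 4096 and stays within the range the base model was trained on.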

Our product is designed for developers and enterprises using LLMs in specialized applications. It improves AI precision and relevance for sector-specific solutions.

We strictly protect user data. We never retain or reuse it for model improvement, which maintains confidentiality and compliance with data protection regulations.

Sentiment analysis

Language translation

Language processing

Content moderation

Color histogram

Shape features

Texture features

Object detection